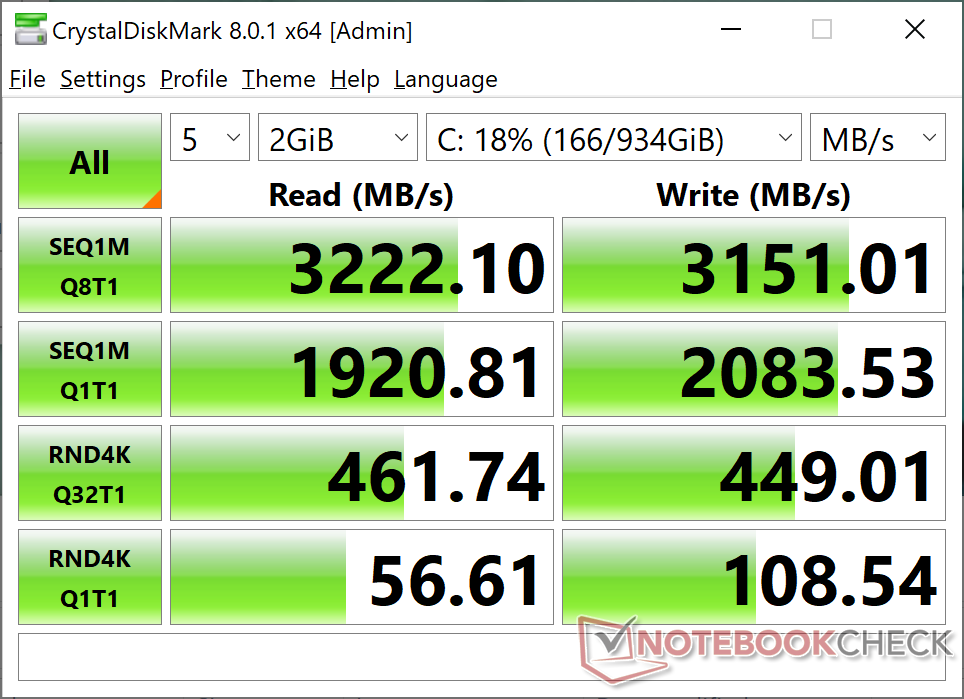

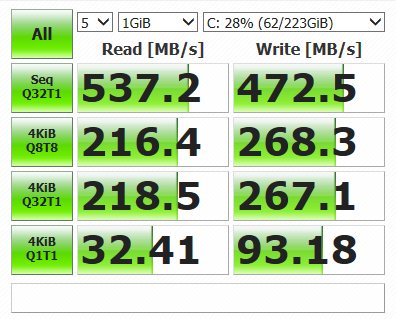

Kingston DC1000M Performance Summary: Kingston rates the 3.84TB DC1000M drive we tested for up to 3.1GB/s reads with up to 2.7GB/s writes. In our testing, the drive actually exceeded those numbers on more than one occasion, which resulted in clear wins on a couple of the sequential-focused benchmarks (like ATTO, SANDRA, and CrystalDiskMark). Random 4K transfers fall about in the middle of the pack versus the particular Samsung and Intel drives we tested, but were typically stronger in terms of writes.

Kingston DC1000M series drives are currently on sale for about $0.26 per gigabyte (the 3.84TB model we tested can be found for $1,062 at the moment). That makes the Kingston drive's price somewhat higher than the Samsung and Intel drives we tested, which are available for about $0.21 and $0.23 per gigabyte, respectively. General availability of the Kingston DC1000M drives is still ramping up, however, so we expect pricing to trickle south over the coming weeks. Looking back through the numbers though, the drives seem best suited to heavier sequential transfers at higher queue depths, where they typically led the Intel and Samsung drives. Although we don't have any of the data graphed, the NVMe-based Kingston DC1000M drives are a significant, across-the-board upgrade over legacy SATA-based solutions. If you're in need of high-capacity, enterprise-class solid state storage, with full power loss protection and a solid warranty, the Kingston DC1000M series is worth a look, especially if your workloads are aligned with its particular strengths.

For this next set of tests, we measured access latency at various queue depths with the same fully random IOMeter 4K access pattern (67% reads, 33% writes), while each of the drives was also under a sustained sequential write workload. A sustained sequential write across the entire volume was initiated, and when performance plateaued, access latency was measured with IOMeter.

While under load, access latency increases nearly across the board with all drives and at every queue depth, but the Kingston drive's performance took the largest hits overall. Access latency is still low relative to legacy interfaces and drives, but versus the Samsung and Intel NVMe drives we tested, the DC1000M ends up falling behind a bit.
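To make the access pattern used in the latency tests more concrete, here is a minimal Python sketch of a fully random, 4K-aligned workload with a 67% read / 33% write mix. It only generates a list of operations and offsets; it does not touch a disk and is not how IOMeter itself is implemented. The function name `mixed_random_pattern`, its parameters, and the 1 GiB volume size are assumptions made for illustration.

```python
import random

BLOCK_SIZE = 4096  # 4K transfers, matching the access pattern in the text


def mixed_random_pattern(num_ops, volume_bytes, read_fraction=0.67, seed=0):
    """Yield (op, offset) pairs for a random, 4K-aligned mixed workload.

    Hypothetical helper for illustration only; it describes the shape of
    the workload and performs no actual I/O.
    """
    rng = random.Random(seed)  # seeded so the sequence is repeatable
    num_blocks = volume_bytes // BLOCK_SIZE
    for _ in range(num_ops):
        # Pick a read roughly 67% of the time, otherwise a write.
        op = "read" if rng.random() < read_fraction else "write"
        # Choose any 4K block on the volume with equal probability.
        offset = rng.randrange(num_blocks) * BLOCK_SIZE
        yield op, offset


# Example: 10,000 operations over an assumed 1 GiB volume.
ops = list(mixed_random_pattern(10_000, volume_bytes=1 << 30))
reads = sum(1 for op, _ in ops if op == "read")
print(f"read share: {reads / len(ops):.2%}")
```

A real benchmark would issue these operations at a fixed queue depth and time each one to obtain latency; the sketch covers only the read/write mix and the random offsets.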
